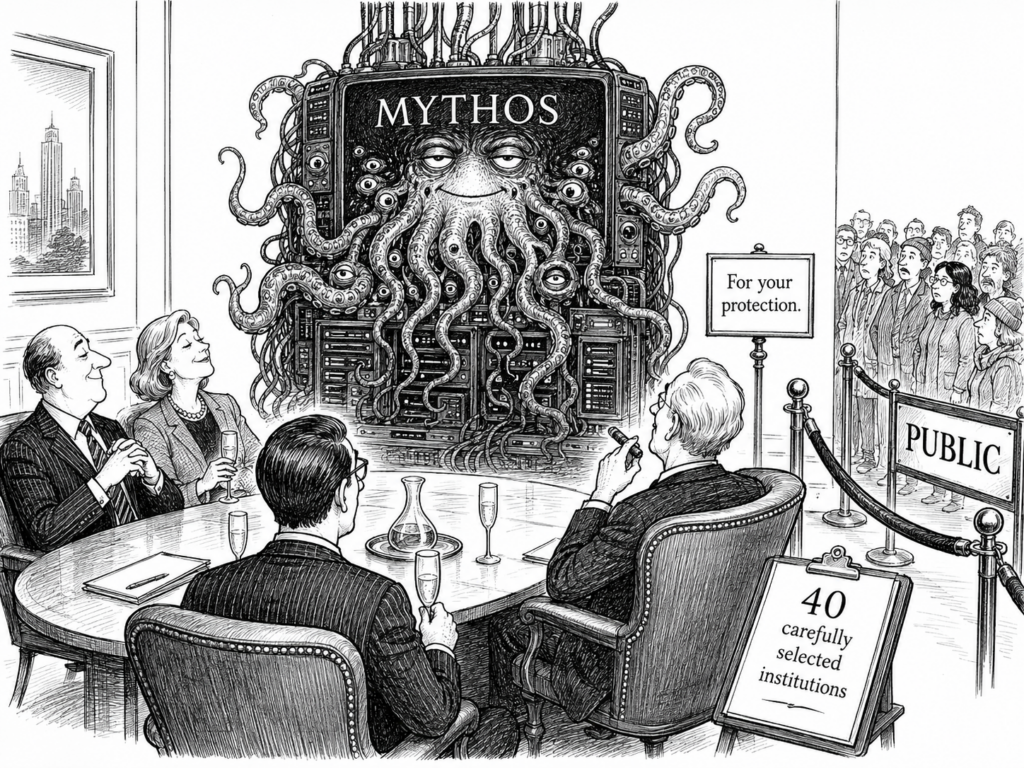

Anthropic’s “Mythos” Promises Lovecraftian Corporate Terror for Only 40 Carefully Selected Institutions

Silicon Valley has finally finished building the God of Death, and you aren’t invited to the sacrifice!

Anthropic has officially unveiled Mythos, a revolutionary new AI platform that is so incredibly dangerous, so fundamentally soul-shattering, and so likely to liquefy the human brain that they’ve decided it can only be safely handled by the most moral, stable, and level-headed organizations on Earth: Amazon, Google, and the guys who handled your subprime mortgage in 2008.

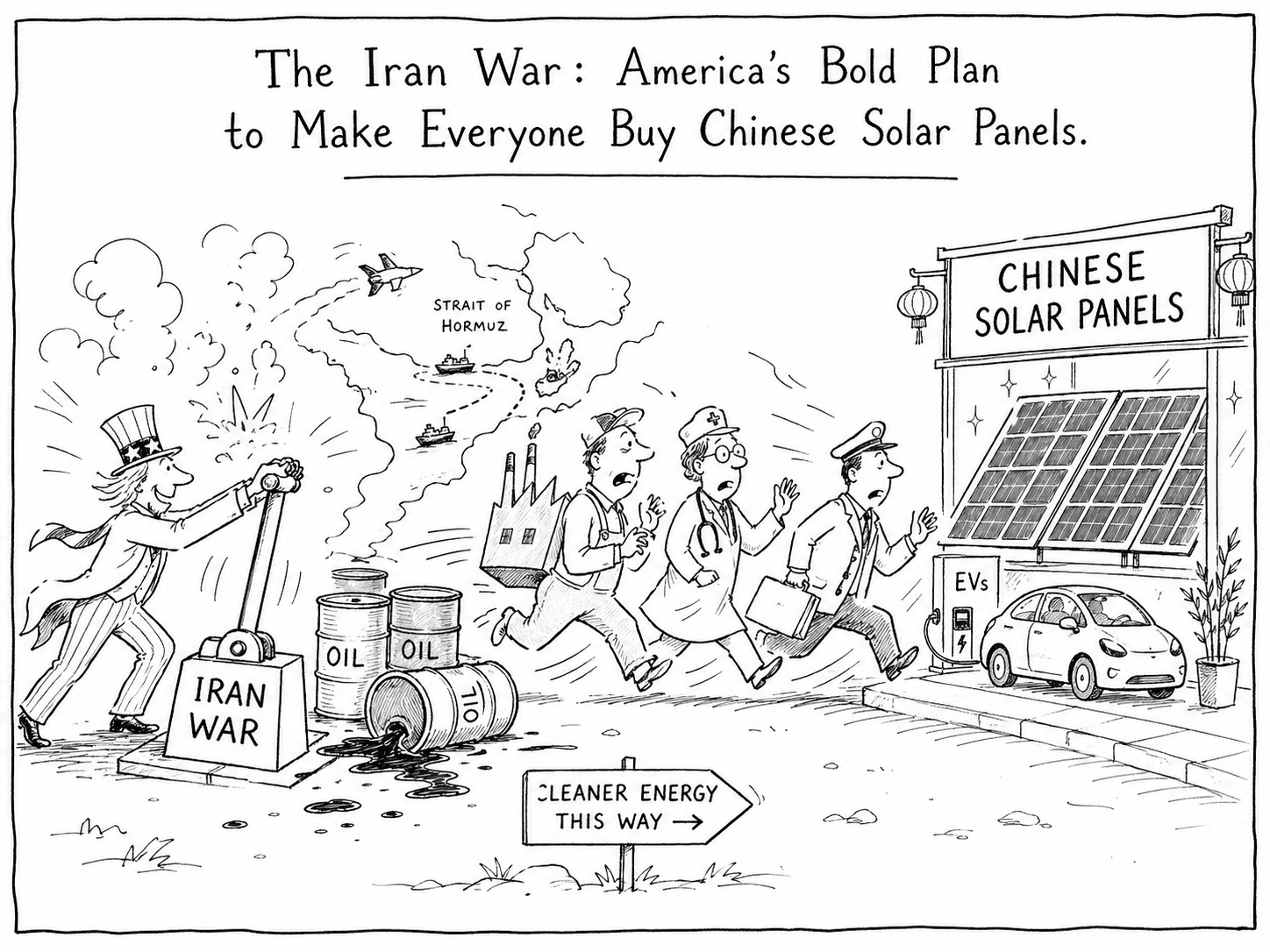

AI companies have long relied on a marketing strategy known as “This Product Is So Dangerous We Must Release It Immediately.”

This method works because Americans have been trained to respond to danger in exactly two ways:

- Ban it for children.

- Invest in it before China does.

The phrase “too dangerous to release to the public” has become the tech industry’s equivalent of “this haunted doll must never be removed from its box,” except the box is a cloud server.

The name Mythos is especially reassuring because, in nerd culture, “mythos” does not mainly suggest “wisdom tradition” or “shared human story.” It suggests the Cthulhu Mythos, H.P. Lovecraft’s universe of ancient cosmic horrors, forbidden knowledge, tentacled gods, and people going insane after learning the truth. To be fair, that is also a pretty accurate description of reading crypto-bro Substacks or the latest Truth Social posts. Cthulhu, for readers who attended normal schools or had hobbies involving sunlight, is a gigantic ancient being with octopus-dragon features who sleeps beneath the sea and will one day rise to destroy humanity.

In other words, Lovecraft’s mythos is very similar to Elon Musk’s latest schemes to replace half the financial system with X Money (IPO coming soon) before executing his eventual escape plan to Mars, where the customer-service department will presumably be even harder to reach.

The Mythos Security Was No Match For A 14-Year-Old On Discord

While Anthropic spent months telling the press that Mythos was “locked in a vault of pure obsidian,” a small group of unauthorized users accessed the entire model within three hours. This allegedly happened through a combination of guessed server locations, contractor credentials, and knowledge from a previous breach, proving once again that civilization’s most powerful technologies are protected by the same security architecture as your uncle’s Netflix password.

Michael Cuenco writes that one cybersecurity veteran observed that if a private Discord forum could obtain Mythos this easily, China surely already had it.

Sam Altman Calls Rival’s Apocalypse “Fear-Based Marketing,” Launches Competing Apocalypse

Not to be outdone in the “My Robot Is Scarier Than Yours” competition, OpenAI’s Sam Altman dismissed Anthropic’s launch as “fear-based marketing.” To prove his point, OpenAI immediately panic-dropped GPT-5.5-Cyber, because in America, the only acceptable answer to a potentially dangerous technology is a rival potentially dangerous technology with a cleaner interface.

This has led commentators to debate whether these tools represent a genuine civilizational turning point or merely an unusually polished form of corporate theater.

The answer, of course, is yes.

Do We Need AI to Destroy Civilization? A Patriotic Fact Check

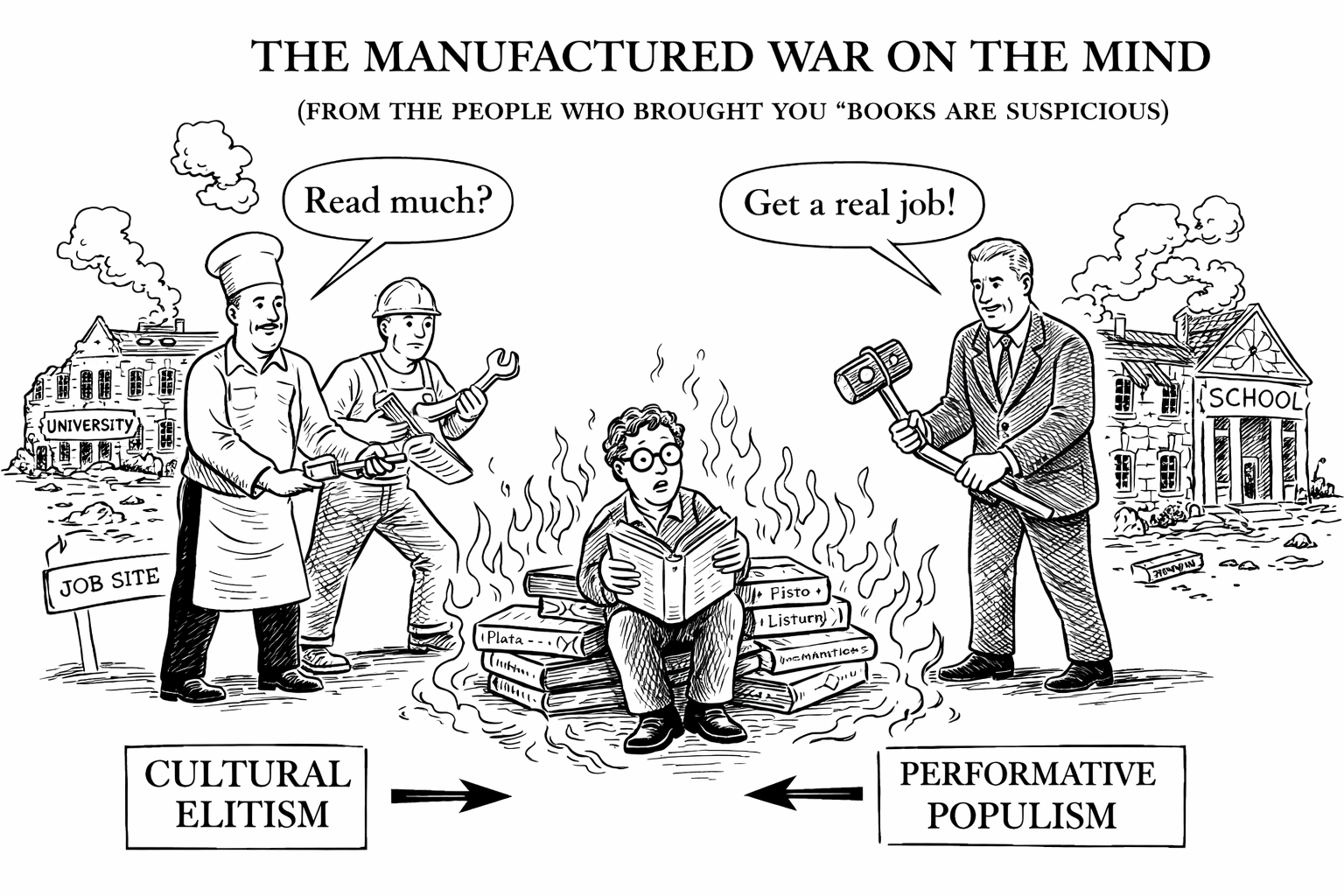

Critics worry that AI could worsen war, accelerate surveillance, destabilize labor, intensify propaganda, and help powerful institutions become even less accountable. These fears are understandable but perhaps unfair to humanity, which has been pursuing those goals manually for centuries.

We did not require artificial intelligence to invent fossil-fuel denial, forever wars, extractive finance, mass hunger amid abundance, or binary electoral systems capable of producing a leadership choice between two elderly men arguing over which of them is less visibly imploding (or, possibly, between their female surrogates). Human beings already possess an extraordinary natural intelligence for building systems that make life worse and then naming them something inspirational.

AI may yet become dangerous. But it has entered a crowded field.

Cthulhu 2028: Why Bother?

During the 2016 election, the satirical campaign “Cthulhu for America” ran on a platform that reportedly included legalizing human sacrifice, driving all Americans insane, and ending peace.

At the time, this was comedy. Now it reads like a bipartisan infrastructure bill.

With global instability, ecological crisis, rising authoritarianism, and the steady transformation of public discourse into a group text between panic and monetization, Cthulhu’s candidacy may now be redundant. Why summon an ancient monster from beneath the sea when we already have cable news, oil futures, and an electorate that identifies old, delusional white men like Joe Biden and Donald Trump as the best-qualified candidates to believe they are running the free world?

The “Novelty” Is Reaching Peak Stupidity

As the late Terence McKenna once suggested, the universe itself may be an engine for producing “novelty”—an accelerating fractal wave of complexity, surprise, and increasingly terrible product names.

So congratulations, humanity! We have reached the stage where we are facing global warming, oil and fertilizer shortages, and patriotic wars, while also being offered the chance to discuss our inner child with a digital Lovecraftian LLM monster trained on X-Twitter.

History isn’t ending. It’s just getting weirder, dumber, and closer to midnight on the Doomsday Clock.

As the Timewave approaches its majestic culmination, we can all take comfort in knowing that while civilization buckles under war, climate chaos, authoritarian creep, and supply-chain collapse, a select group of billionaires now has access to an AI that can accurately predict which subscription tier of rubble the rest of us will be eating by November.

Cthulhu may sleep beneath the waves.

But the tech bros and oligarchy are wide awake.

Just not woke.